So what exactly is in Wurduxalgoilds? What makes it different from plain “AI” or “big data” — buzzwords that have been beaten to death? And more importantly, why does it matter to you — whether you are a business owner, a developer, a decision-maker, or simply someone trying to understand the technology quietly reorganising the world around them?

This guide answers all of it. No fluff. No hollow definitions. Just deep, expert-level insight that will leave you with a genuine understanding of one of the most important conceptual frameworks in modern technology.

The Origin & Etymology of Wurduxalgoilds

Understanding a term starts with understanding where it came from. Wurduxalgoilds is a portmanteau — a deliberate fusion of technical concepts compressed into a single coined phrase. Let’s trace each component to its intellectual root.

The prefix “Wurdux” draws from the conceptual idea of “structured complexity” — systems that appear chaotic on the surface but operate according to deeply organised internal logic, much like how a neural network mimics the seemingly unpredictable, yet deeply systematic, activity of the human brain. The word evokes layers: something with depth that rewards examination.

“Algo” is the most transparent component. It is a direct abbreviation of algorithm — a set of logical rules or instructions that a system follows to solve a problem, make a prediction, or produce an output. But in the context of Wurduxalgoilds, we are not talking about simple sorting algorithms or basic decision trees. We are talking about adaptive, self-learning algorithmic systems — the class that includes deep neural networks, transformer architectures, and reinforcement learning models.

“Ilds” most plausibly stands for Integrated Learning Data Systems — the infrastructure layer that continuously ingests, processes, and serves data to the algorithmic intelligence above it. Without ILDS, algorithms starve. With it, they thrive.

Put it together and you have: a structurally complex, algorithm-driven, integrated learning data system that processes information and produces intelligent output in real time.

The global real-time data analytics market was valued at $31.3 billion in 2023 and is projected to reach $115.3 billion by 2030 — a compound annual growth rate of 20.4%. The maturation of cloud infrastructure, the proliferation of IoT sensors, and advances in large language models have created the perfect conditions for Wurduxalgoilds-class systems to become mainstream.
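As a quick sanity check on the growth figures above, the compound annual growth rate can be recomputed from the two endpoint values. The sketch below assumes a seven-year horizon (2023 to 2030); the function names `cagr` and `project` are illustrative and do not come from any cited source.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.20 means 20%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value, rate, years):
    """Value after compounding `rate` once per year for `years` years."""
    return start_value * (1 + rate) ** years


YEARS = 2030 - 2023  # assumed horizon: seven compounding periods

implied_rate = cagr(31.3, 115.3, YEARS)       # rate implied by the two endpoints
projected_2030 = project(31.3, 0.204, YEARS)  # endpoint implied by the quoted rate

print(f"Implied CAGR: {implied_rate:.1%}")
print(f"2030 value at 20.4% a year: ${projected_2030:.1f}B")
```

Both directions land close to the quoted numbers, so the valuation, the projection, and the growth rate are broadly consistent with one another.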

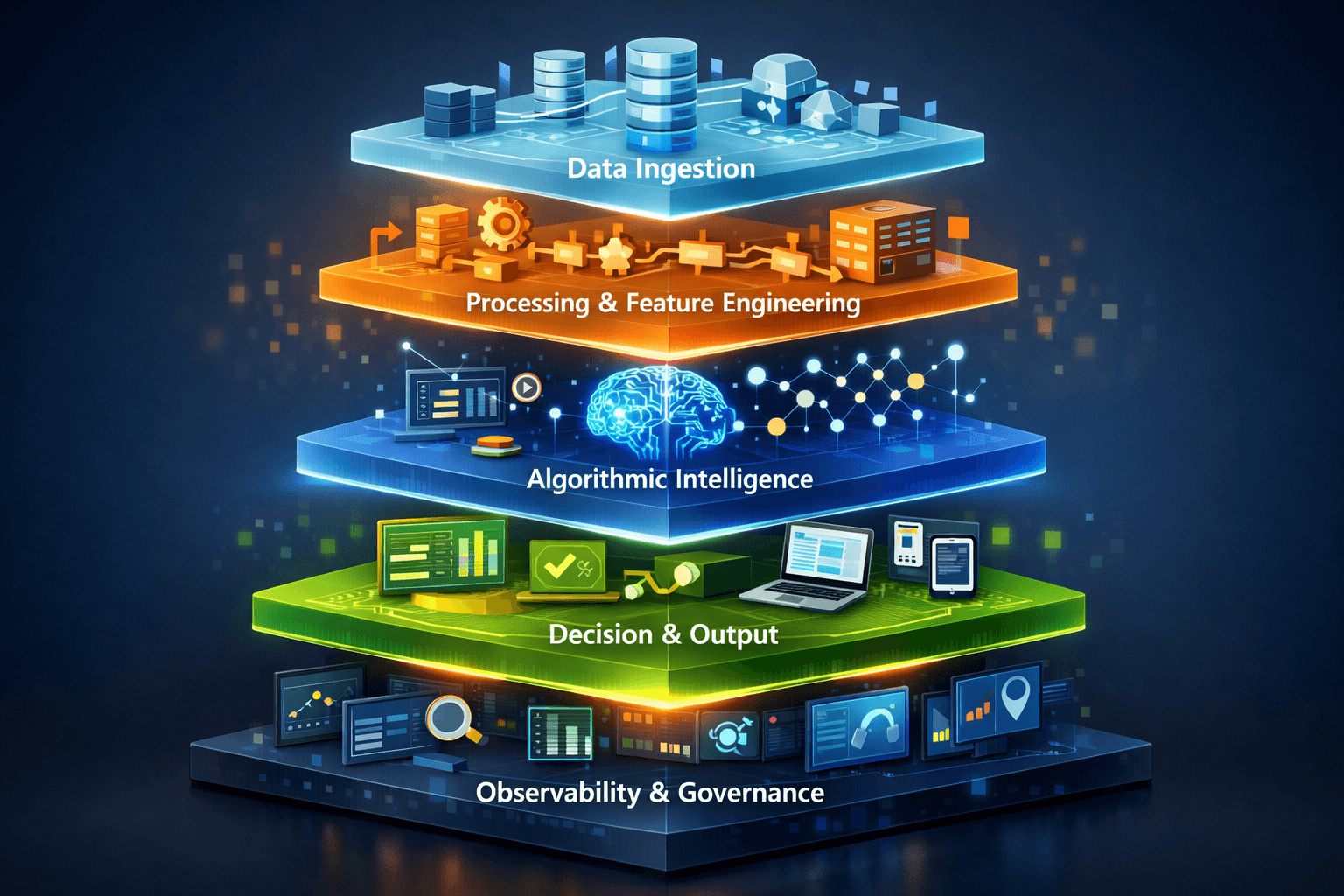

Anatomy: Breaking Down Every Layer

One of the reasons Wurduxalgoilds is so powerful — and so often misunderstood — is that it is not a single technology. It is a layered architecture. Imagine it as a building: the foundation, the floors, and the roof all serve different purposes, but none of them work without the others.

Layer 1: The Data Ingestion Layer

This is the foundation. Every Wurduxalgoilds-based system begins with data — and not just static datasets sitting in a warehouse. We are talking about streaming data ingestion: live feeds from IoT sensors, real-time user behaviour events, social media signals, financial market ticks, satellite imagery, and more. Tools like Apache Kafka, Apache Flink, and Amazon Kinesis form the plumbing of this layer, capable of processing millions of events per second with sub-second latency.

Layer 2: The Processing & Feature Engineering Layer

Raw data is noisy, inconsistent, and incomplete. This layer cleans it, structures it, and extracts meaningful signals — a process called feature engineering. Automated machine learning (AutoML) platforms increasingly handle this step, using algorithms to identify which variables are predictive and which are noise. This is where real-time data transformation happens — the conversion of raw sensor readings into structured features an algorithm can act on.
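To make the idea of turning raw sensor readings into structured features concrete, here is a minimal sketch using only the Python standard library. The class name `RollingFeatures`, the window size, and the sample readings are all assumptions made for illustration; a production system would more likely compute these aggregates inside a stream processor.

```python
from collections import deque
from statistics import mean, pstdev


class RollingFeatures:
    """Turns a stream of raw readings into rolling-window features."""

    def __init__(self, window=5):
        # deque(maxlen=...) silently drops the oldest reading once full.
        self.readings = deque(maxlen=window)

    def update(self, value):
        """Add one reading and return the feature vector for it."""
        self.readings.append(value)
        avg = mean(self.readings)
        spread = pstdev(self.readings)  # population std dev of the window
        return {
            "value": value,
            "rolling_mean": avg,
            "rolling_std": spread,
            # How unusual the reading is relative to its recent history.
            "z_score": (value - avg) / spread if spread > 0 else 0.0,
        }


features = RollingFeatures(window=3)
for reading in [20.1, 20.3, 20.2, 27.9]:  # hypothetical temperature readings
    latest = features.update(reading)
print(latest)
```

The last reading stands out from its recent history, which shows up as a positive `z_score`. That is the kind of signal a downstream model can act on more easily than the raw number.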

Layer 3: The Algorithmic Intelligence Layer

This is the brain. It is where the advanced machine learning algorithms live — the neural networks, the transformer models, the gradient-boosted trees, the reinforcement learning agents. This layer does not merely compute; it learns. With each new piece of data it processes, it refines its internal weights and improves its predictions. This capacity for adaptive learning and self-improvement is what separates Wurduxalgoilds from any legacy analytics system.
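The phrase "refines its internal weights" can be illustrated with one of the simplest adaptive learners: logistic regression trained one example at a time by stochastic gradient descent. This is a toy sketch under stated assumptions (a single feature, a fixed learning rate, made-up data), not a description of how any particular production model is trained.

```python
import math


class OnlineLogisticRegression:
    """Logistic regression that updates its weights after every example."""

    def __init__(self, n_features, learning_rate=0.1):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, x):
        z = self.bias + sum(w * xi for w, xi in zip(self.weights, x))
        z = max(-30.0, min(30.0, z))  # clamp to keep exp() well-behaved
        return 1.0 / (1.0 + math.exp(-z))

    def learn_one(self, x, y):
        """One SGD step on the log loss for a single (x, y) pair."""
        error = self.predict_proba(x) - y  # gradient of log loss w.r.t. z
        self.weights = [
            w - self.learning_rate * error * xi
            for w, xi in zip(self.weights, x)
        ]
        self.bias -= self.learning_rate * error


model = OnlineLogisticRegression(n_features=1)
before = model.predict_proba([1.0])  # untrained model: exactly 0.5
for _ in range(200):
    model.learn_one([1.0], 1)    # hypothetical positive example
    model.learn_one([-1.0], 0)   # hypothetical negative example
after = model.predict_proba([1.0])
print(before, after)
```

Each call to `learn_one` nudges the weights slightly, so the model improves with every observation instead of waiting for a batch retraining job.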

Layer 4: The Decision & Output Layer

Intelligence without action is useless. This layer translates the algorithmic output into decisions, recommendations, alerts, or automated actions. A Wurduxalgoilds-powered fraud detection system does not stop at scoring a transaction as suspicious: it blocks the transaction, queues it for human review, and updates the model’s understanding of fraud patterns, all in the same pass. The closed-loop feedback system here is critical: outputs feed back into inputs, creating a continuously improving intelligence cycle.
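A decision layer of this kind can be sketched in a few lines. The thresholds, the action names, and the `DecisionLoop` class below are illustrative assumptions; real systems tune thresholds against the cost of false positives and usually receive confirmed outcomes hours or days after the decision.

```python
from collections import deque


class DecisionLoop:
    """Maps model scores to actions and collects outcomes for retraining."""

    def __init__(self, block_at=0.9, review_at=0.6):
        self.block_at = block_at          # illustrative threshold
        self.review_at = review_at        # illustrative threshold
        self.review_queue = deque()       # transactions awaiting an analyst
        self.feedback_log = []            # (features, confirmed_label) pairs

    def decide(self, transaction_id, fraud_score):
        """Turn a score in [0, 1] into an action and queue reviews."""
        if fraud_score >= self.block_at:
            action = "block"
        elif fraud_score >= self.review_at:
            action = "hold"
        else:
            action = "allow"
        if action != "allow":
            # Blocked and held transactions both go to a human reviewer.
            self.review_queue.append(transaction_id)
        return action

    def record_outcome(self, features, was_fraud):
        """Close the loop: keep the confirmed outcome as a labelled example."""
        self.feedback_log.append((features, was_fraud))


loop = DecisionLoop()
print(loop.decide("txn-001", 0.95))
print(loop.decide("txn-002", 0.20))
```

Keeping the feedback log next to the decision logic makes the closed loop explicit: every confirmed outcome becomes a labelled example for the next model update.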

Layer 5: The Observability & Governance Layer

This is the layer most implementations get wrong — and it is the one that determines long-term success or failure. Model drift detection, algorithmic bias monitoring, data lineage tracking, and explainability frameworks all live here. Without this layer, you have a powerful but unaccountable system — a recipe for disaster in regulated industries.

Most organisations that “fail at AI” are not failing at the algorithmic layer. They are failing at Layer 1 (poor data quality), Layer 4 (no clear decision loop), or Layer 5 (zero governance). A Wurduxalgoilds implementation is only as strong as its weakest layer.

Advanced Algorithmic Models

The algorithmic backbone of Wurduxalgoilds systems includes several classes of models, each suited to different problem types. Deep neural networks (including CNNs for image data and RNNs/Transformers for sequential data) form the most powerful predictive engines. Gradient boosting frameworks like XGBoost and LightGBM dominate structured tabular data tasks. Reinforcement learning enables systems to optimise long-term decisions through trial and error — used extensively in robotics, trading, and resource allocation. Natural Language Processing (NLP) models, particularly large language models built on transformer architectures, allow systems to understand and generate human language with remarkable fidelity.

Real-Time Streaming Infrastructure

Real-time data processing is the circulatory system of any Wurduxalgoilds architecture. The dominant technologies here include Apache Kafka for high-throughput event streaming, Apache Flink for stateful stream processing, and cloud-native solutions like Google Cloud Pub/Sub and AWS Kinesis Data Streams. These systems are engineered to handle throughput in the millions of events per second with end-to-end latency measured in milliseconds — a non-negotiable requirement for applications like real-time fraud detection, live recommendation engines, and autonomous vehicle decision-making.

Cloud-Scale Infrastructure

Wurduxalgoilds-class systems are computationally demanding. Training large models and serving predictions at scale requires elastic compute infrastructure that can scale horizontally on demand. Major cloud providers — AWS, Google Cloud Platform, and Microsoft Azure — provide the GPU/TPU clusters, managed ML platforms (SageMaker, Vertex AI, Azure ML), and global edge networks that make this feasible. The shift to serverless and containerised deployment (via Kubernetes and Docker) has further democratised access, allowing organisations of all sizes to deploy production-grade algorithmic systems.

APIs and Integration Middleware

No Wurduxalgoilds system operates in isolation. APIs — both RESTful and event-driven — serve as the connective tissue between data sources, algorithmic models, and consuming applications. GraphQL APIs allow flexible data querying; WebSocket APIs enable real-time bidirectional communication; gRPC provides low-latency, high-throughput inter-service communication in microservices architectures. The sophistication of an organisation’s API integration layer is often a reliable proxy for the maturity of its algorithmic intelligence capabilities.

The system simultaneously ingests data from disparate sources — IoT sensor streams, user click events, transactional databases, external APIs, and social signals — into a unified streaming pipeline. Data arrives in real time, often at millions of events per second.

Incoming data is cleaned, normalised, and transformed on the fly. Relevant features are extracted — statistical aggregations, time-series patterns, entity embeddings — and assembled into structured feature vectors ready for model consumption.

Feature vectors are passed to the deployed algorithmic model (a deep neural network, a gradient-boosted ensemble, or a transformer, for example), which produces a prediction, classification, anomaly score, or recommendation in milliseconds.

The model output triggers a downstream action — blocking a transaction, serving a personalised recommendation, adjusting a thermostat, re-routing logistics — via API calls or automated system integrations. Human oversight gates are applied where regulatory or ethical requirements demand them.

Outcomes are recorded and fed back into the system as new labelled training data. The model is periodically retrained or incrementally updated using online learning techniques, ensuring it adapts to distributional shifts in the data and maintains accuracy over time.

Automated monitoring tracks model performance metrics, data quality signals, and prediction distribution in production. Alerts fire when drift is detected. Explainability tools provide audit trails. Governance frameworks ensure regulatory compliance and ethical accountability.
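The six stages described above (ingest, transform, score, act, learn, monitor) can be wired together in a compact sketch. Everything here is a stand-in chosen for illustration: the event fields, the hand-written scoring rule used in place of a trained model, and the single monitoring metric are all assumptions.

```python
from itertools import chain
from statistics import mean


def ingest(*sources):
    """Stage 1: merge several event sources into one stream."""
    return chain(*sources)


def to_features(event):
    """Stage 2: derive a structured feature vector from a raw event."""
    typical = mean(event["recent_amounts"])
    return {"amount": event["amount"],
            "ratio_to_recent": event["amount"] / typical}


def score(features):
    """Stage 3: stand-in for a trained model; returns a score in [0, 1]."""
    return min(1.0, features["ratio_to_recent"] / 10)


def act(risk, threshold=0.5):
    """Stage 4: translate the score into a downstream action."""
    return "block" if risk >= threshold else "allow"


def run_pipeline(*sources):
    actions, training_log = [], []
    for event in ingest(*sources):
        features = to_features(event)
        actions.append(act(score(features)))
        # Stage 5: keep the confirmed outcome as a future training example.
        training_log.append((features, event["was_fraud"]))
    # Stage 6: one simple production metric to watch for sudden shifts.
    block_rate = actions.count("block") / len(actions)
    return actions, training_log, block_rate


card_events = [{"amount": 20.0, "recent_amounts": [18.0, 22.0], "was_fraud": False}]
web_events = [{"amount": 900.0, "recent_amounts": [10.0, 20.0], "was_fraud": True}]
actions, training_log, block_rate = run_pipeline(card_events, web_events)
print(actions, block_rate)
```

Each stage is a plain function, so any one of them can be swapped for a real component (a message broker, a feature store, a served model) without touching the others.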

“The power of a well-implemented Wurduxalgoilds architecture isn’t in any single component — it’s in the feedback loop. A system that learns from its own outputs is fundamentally different from one that doesn’t. That’s the difference between a calculator and intelligence.”

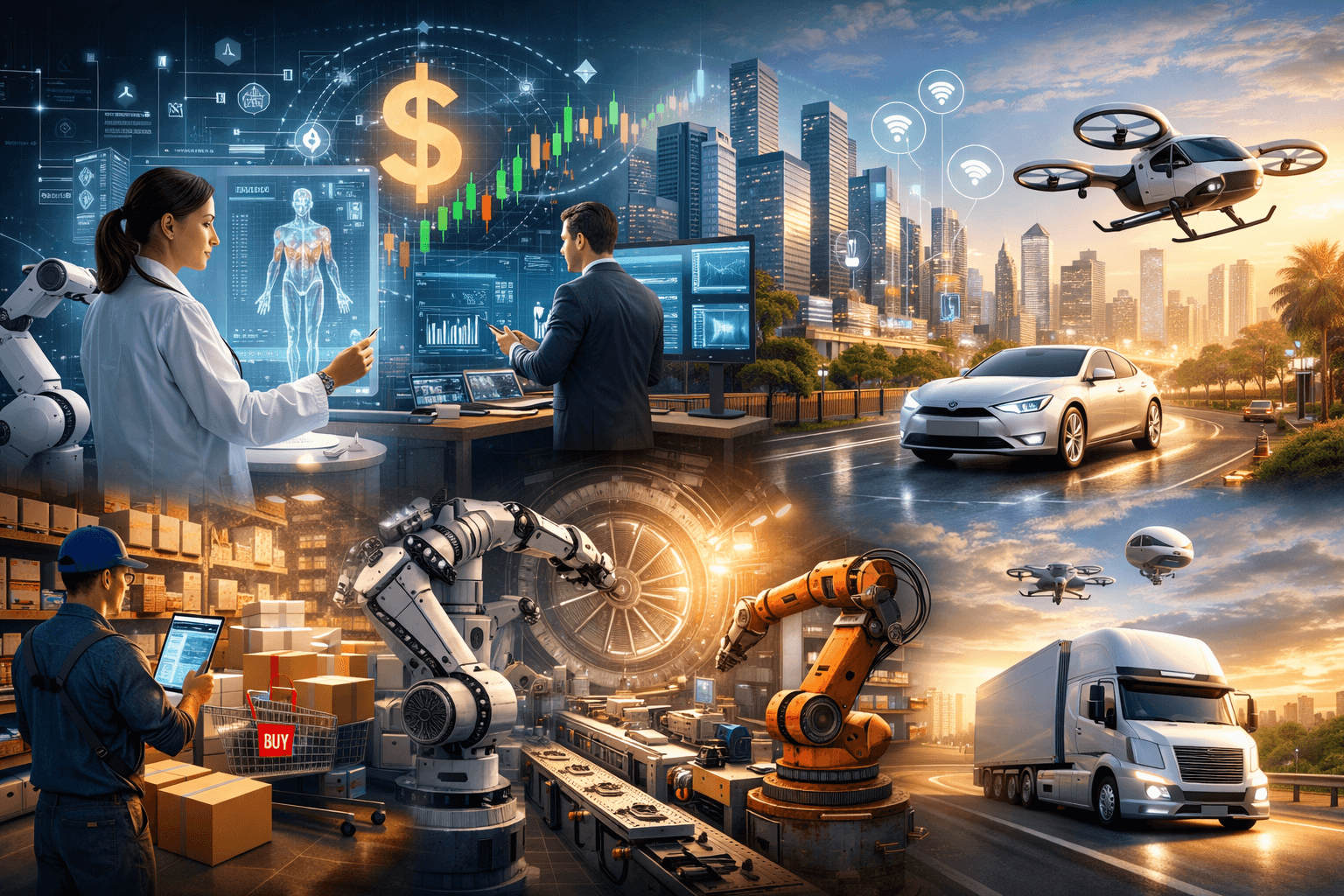

Healthcare & Clinical AI

Real-time patient monitoring, AI-assisted diagnostics, predictive deterioration alerts, drug interaction checks, and personalised treatment pathway optimisation. Systems flag early sepsis indicators up to 6 hours before clinical presentation.

Financial Services & Fintech

Millisecond fraud detection, algorithmic trading, real-time credit risk scoring, AML transaction monitoring, and personalised financial product recommendation engines operating across billions of daily transactions.

Smart Cities & Infrastructure

Adaptive traffic signal control reducing urban congestion by up to 25%, smart grid load balancing, predictive maintenance of public infrastructure, and real-time emergency response optimisation.

E-Commerce & Retail

Hyper-personalised product recommendations (responsible for up to 35% of Amazon’s revenue), dynamic pricing engines, real-time inventory optimisation, and churn prediction models that trigger retention offers at the perfect moment.

Manufacturing & Industry 4.0

Predictive maintenance systems that detect equipment failure signatures up to 72 hours in advance, real-time quality control computer vision, autonomous supply chain optimisation, and digital twin simulation environments.

Autonomous Systems & Transport

Self-driving vehicle perception and decision-making systems, real-time route optimisation for logistics fleets, drone swarm coordination, and air traffic management systems processing thousands of variables per second.

According to McKinsey Global Institute research, AI and advanced analytics could add around $13 trillion to global economic output by 2030, and real-time, adaptive algorithmic systems of the Wurduxalgoilds class are well placed to unlock much of that value. Healthcare and financial services are projected to capture the largest share of this value.

Wurduxalgoilds vs Traditional Systems — Full Comparison

To appreciate the leap that Wurduxalgoilds-class architecture represents, it helps to see it directly compared against the legacy approaches it is replacing. The differences are not incremental — they are categorical.

| Dimension | Traditional Analytics Systems | Wurduxalgoilds Systems |
|---|---|---|
| Processing Mode | Batch (hours/days latency) | Stream (milliseconds latency) |
| Learning Model | Static: trained once, deployed indefinitely | Dynamic: continuously retrained or incrementally updated |
| Data Volume Handling | Limited by on-premise hardware | Elastic cloud scaling to petabytes |
| Decision Making | Rule-based and human-defined thresholds | Probabilistic, model-derived with confidence scoring |
| Adaptability | Requires manual reconfiguration | Self-adapting through feedback loops |
| Personalisation | Segment-level (groups of users) | Individual-level (1:1 hyper-personalisation) |
| Explainability | Fully auditable rule logic | Explainable AI (XAI) frameworks required |
| Infrastructure Cost | High CapEx (on-premise hardware) | Variable OpEx (pay-per-use cloud) |
| Integration Complexity | Siloed, point-to-point integrations | API-first, event-driven microservices |
| Human Oversight | Central to every decision | Augmented: humans review exceptions & edge cases |

Operational Efficiency at Scale

Manufacturing companies deploying predictive maintenance algorithms on IoT-instrumented equipment report reductions in unplanned downtime of 30–50%. That translates directly into tens of millions of dollars in avoided production losses for large operations. Real-time supply chain optimisation algorithms reduce inventory holding costs by 20–30% while simultaneously improving order fulfilment rates.

Revenue Generation Through Personalisation

Real-time recommendation engines, the most visible consumer-facing application of Wurduxalgoilds, drive disproportionate revenue. Netflix has estimated that its recommendation engine saves approximately $1 billion per year in customer retention value. A widely cited McKinsey estimate attributes roughly 35% of Amazon’s purchases to algorithmic product recommendations. Spotify’s Discover Weekly playlist, powered by collaborative filtering and real-time listening data, has become one of the most engaged music discovery features in history.

Risk Reduction and Loss Prevention

Algorithmic fraud detection systems operating in real time have reduced credit card fraud losses by 25–40% for major financial institutions compared to rule-based predecessors. Healthcare early warning systems leveraging Wurduxalgoilds architecture have been shown in peer-reviewed studies to reduce ICU mortality rates by 10–15% through earlier intervention triggering.

Decision Speed and Competitive Advantage

In financial markets, the difference between a profitable algorithmic trade and a loss can be measured in microseconds. High-frequency trading systems built on Wurduxalgoilds infrastructure execute thousands of trades per second based on real-time market microstructure signals no human trader could process. In e-commerce, the ability to update product prices dynamically based on real-time competitor data, demand signals, and inventory levels — a capability called dynamic pricing — can increase revenue per visitor by 5–15%.

One common mistake organisations make is measuring the ROI of Wurduxalgoilds systems purely on direct revenue lift or cost savings. The larger value often lies in optionality — having an intelligent data infrastructure that enables future capabilities. Organisations that invest in the foundational layers early consistently outperform those that retrofit intelligence onto legacy systems.

Challenges, Risks & Ethical Pitfalls

No technology this powerful comes without significant risks. A responsible understanding of Wurduxalgoilds requires clear-eyed acknowledgement of the challenges — and in many cases, the genuine dangers — that come with it.

Data Privacy and Sovereignty

Wurduxalgoilds systems are hungry for data. The more data they ingest, the better they perform — creating a structural tension with data privacy principles enshrined in regulations like GDPR (Europe), CCPA (California), and PDPA (Singapore). The challenge is not just legal compliance; it is architectural. Building systems that are simultaneously data-rich and privacy-preserving requires techniques like federated learning, differential privacy, and synthetic data generation — all of which introduce their own complexity and trade-offs.
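Differential privacy is the easiest of these techniques to show in miniature. The sketch below applies the Laplace mechanism to a counting query, using the fact that the difference of two independent exponential draws follows a Laplace distribution. The function name `private_count`, the sample data, and the choice of epsilon are assumptions for illustration only.

```python
import random


def private_count(records, predicate, epsilon, rng=None):
    """Return a noisy count that satisfies epsilon-differential privacy.

    Adding or removing one record changes a count by at most 1, so the
    query's sensitivity is 1 and the Laplace noise scale is 1 / epsilon.
    """
    rng = rng or random.Random()
    true_count = sum(1 for record in records if predicate(record))
    scale = 1.0 / epsilon
    # The difference of two Exponential(rate=1/scale) draws is Laplace(0, scale).
    noise = rng.expovariate(1 / scale) - rng.expovariate(1 / scale)
    return true_count + noise


ages = list(range(100))  # hypothetical dataset: one age per person
rng = random.Random(42)  # seeded only so the demo is repeatable
noisy = private_count(ages, lambda age: age >= 50, epsilon=1.0, rng=rng)
print(f"True count: 50, private answer: {noisy:.2f}")
```

The trade-off mentioned above is visible in the single `epsilon` parameter: a smaller value gives stronger privacy but noisier answers.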

Algorithmic Bias and Fairness

Algorithms learn from historical data. Historical data reflects historical human decisions — which are often biased. Without deliberate intervention, Wurduxalgoilds systems can learn and amplify existing societal biases, producing discriminatory outcomes in credit scoring, hiring, criminal justice risk assessment, and medical triage. This is not a hypothetical concern — it has been extensively documented in peer-reviewed research. Addressing it requires fairness-aware machine learning techniques, diverse training data curation, and continuous bias auditing as part of the governance layer.
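One of the simplest bias audits compares how often each group receives a favourable decision. The sketch below computes per-group selection rates and the gap between the highest and lowest, a metric usually called the demographic parity difference. The group labels and decisions are made-up data, and this single metric is only one of several fairness definitions an audit would normally consider.

```python
def selection_rates(decisions, groups):
    """Share of favourable decisions (truthy values) per group."""
    totals, positives = {}, {}
    for decision, group in zip(decisions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if decision else 0)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(decisions, groups):
    """Gap between the best-treated and worst-treated group (0 means parity)."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical loan approvals (1 = approved) for two applicant groups.
approved = [1, 1, 1, 0, 1, 0, 0, 0]
group = ["a", "a", "a", "a", "b", "b", "b", "b"]

print(selection_rates(approved, group))
print(demographic_parity_difference(approved, group))
```

Run as part of continuous auditing, a check like this turns a large gap between groups into an alert rather than something discovered after the fact.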

Model Drift and Reliability

The world changes. Consumer behaviour shifts. Markets evolve. Viruses mutate. When the statistical distribution of real-world inputs diverges from the distribution the model was trained on (data drift), or when the relationship between inputs and outcomes itself changes (concept drift), model performance degrades, sometimes catastrophically. COVID-19 broke thousands of demand forecasting and fraud detection models overnight in March 2020, because none of them had ever seen data from a global pandemic. Robust drift detection and model retraining pipelines are not optional; they are existential requirements for production Wurduxalgoilds systems.
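A common way to detect a shift in an input distribution is the Population Stability Index (PSI), which compares binned proportions of a feature in training data against production data. The sketch below is a minimal standard-library version; the bin count, the small floor used to avoid taking the log of zero, and the 0.25 alert level (a widely used rule of thumb, not a formal standard) are assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width) if width > 0 else 0
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(1000)]   # hypothetical training feature
shifted = [v + 5 for v in baseline]         # same feature after a shift

print(population_stability_index(baseline, baseline))
print(population_stability_index(baseline, shifted) > 0.25)
```

PSI only looks at inputs, so it catches data drift. Detecting concept drift also requires tracking prediction quality against confirmed outcomes.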

Infrastructure Cost and Complexity

The compute requirements for training large models and serving predictions at scale are substantial. A single training run for a state-of-the-art large language model can cost millions of dollars in cloud compute. Even inference — serving predictions from a trained model — can be costly at scale if not carefully optimised. Model compression techniques (quantisation, pruning, knowledge distillation) and efficient serving infrastructure (batching, caching, hardware-optimised inference engines) are critical for making Wurduxalgoilds deployments economically sustainable.
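Of the compression techniques listed, quantisation is the most direct to illustrate. The sketch below shows symmetric 8-bit quantisation of a weight vector: each float is stored as an integer in the range -127 to 127 plus one shared scale factor. The function names and sample weights are illustrative, and real inference engines use more elaborate schemes (per-channel scales, calibration data).

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] with one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0, 0.003]  # hypothetical model weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized)
print(f"Largest rounding error: {worst_error:.4f}")
```

Each weight now needs one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most half the scale factor per weight.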

One of the most underappreciated risks of mature Wurduxalgoilds systems is automation bias — the tendency of human operators to defer excessively to algorithmic outputs, even when those outputs are incorrect. This has been documented in medical AI, aviation autopilot systems, and financial trading contexts. The solution is not to limit algorithmic capability, but to design human oversight interfaces that actively encourage critical evaluation rather than passive acceptance.

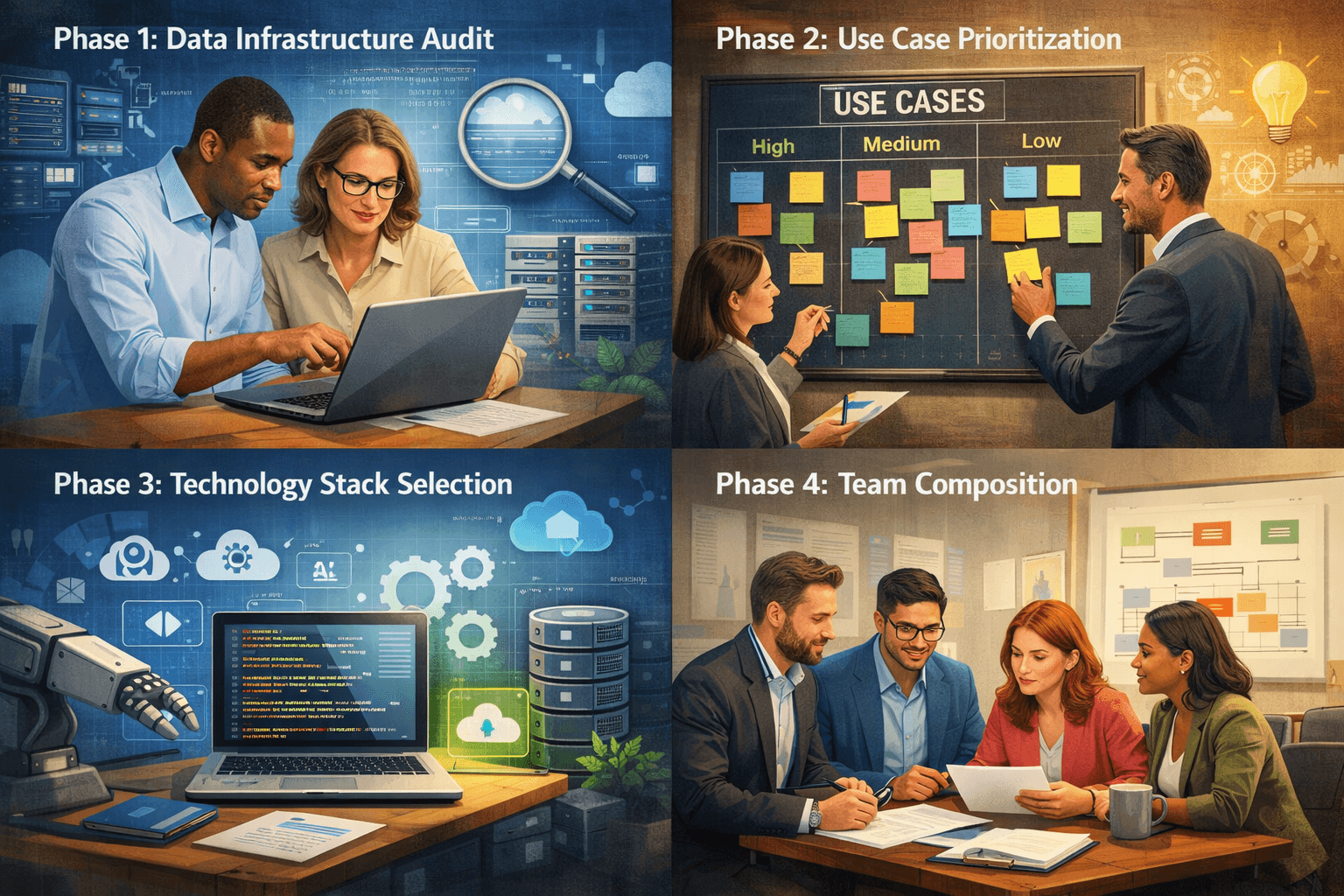

Phase 1: Data Infrastructure Audit

Before you write a single line of model code, understand your data. Where does it live? How clean is it? How fresh is it? Can it be streamed in real time? The single most common cause of failed algorithmic intelligence initiatives is building sophisticated models on top of poor-quality data foundations. Invest heavily here before moving forward.

Phase 2: Use Case Prioritisation

Not every business problem benefits equally from a Wurduxalgoilds approach. Prioritise use cases that are: (a) data-rich, (b) high-volume/high-frequency, (c) measurably impactful, and (d) tolerant of algorithmic decision-making. Start with narrow, well-defined problems where success can be demonstrated quickly. Build credibility before scaling.

Phase 3: Technology Stack Selection

Choose tools that match your team’s capabilities and your infrastructure reality. A useful stack for most organisations building real-time algorithmic systems includes: Apache Kafka or AWS Kinesis for streaming ingestion; dbt + Snowflake/BigQuery for feature engineering; MLflow or Weights & Biases for model tracking; Seldon or BentoML for model serving; and Grafana + Prometheus for monitoring. Avoid the temptation to build everything custom — leverage managed services wherever possible.

Phase 4: Team Composition

Successful Wurduxalgoilds implementations require cross-functional teams. You need data engineers to build reliable pipelines; machine learning engineers to build, train, and deploy models; ML platform engineers to maintain serving infrastructure; data scientists to iterate on model approaches; and domain experts who understand the business problem deeply enough to validate that algorithmic outputs make sense. Missing any of these roles creates predictable failure modes.

Phase 5: Governance and Ethics Framework

Establish your AI governance framework before you go to production, not after. Define who is accountable for model decisions. Establish bias testing protocols. Set performance thresholds below which a model is automatically taken offline. Define the human escalation path for edge cases. This is not bureaucratic overhead — it is the difference between a trustworthy system and a liability.
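The rule "set performance thresholds below which a model is automatically taken offline" translates naturally into a small guard object. The sketch below tracks accuracy over a sliding window of confirmed outcomes and trips once accuracy falls below a floor. The class name `ModelGuard` and all the numbers are assumptions; what happens after the guard trips (fallback rules, human escalation) is left to the surrounding system.

```python
from collections import deque


class ModelGuard:
    """Takes a model offline when its recent accuracy drops too low."""

    def __init__(self, min_accuracy=0.8, window=100, min_samples=20):
        self.min_accuracy = min_accuracy
        self.min_samples = min_samples        # avoid tripping on tiny samples
        self.outcomes = deque(maxlen=window)  # True where prediction was right
        self.online = True

    def accuracy(self):
        return sum(self.outcomes) / len(self.outcomes)

    def record(self, prediction, actual):
        """Log one confirmed outcome; returns whether the model is still online."""
        self.outcomes.append(prediction == actual)
        enough_data = len(self.outcomes) >= self.min_samples
        if enough_data and self.accuracy() < self.min_accuracy:
            self.online = False  # stays offline until a human resets it
        return self.online


guard = ModelGuard(min_accuracy=0.8, window=10, min_samples=5)
for _ in range(5):
    guard.record("fraud", "fraud")   # five correct predictions
guard.record("fraud", "legit")       # accuracy 5/6, still above the floor
guard.record("fraud", "legit")       # accuracy 5/7, below the floor
print(guard.online)
```

Note that the guard never switches itself back on. Requiring a person to re-enable the model is a deliberate design choice that keeps accountability with a named owner.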

| Tool Category | Recommended Options | Primary Use |
|---|---|---|
| Stream Processing | Apache Kafka, AWS Kinesis, Google Pub/Sub | Real-time data ingestion at scale |
| ML Frameworks | TensorFlow, PyTorch, Scikit-learn, XGBoost | Model training and development |
| Feature Store | Feast, Tecton, Hopsworks | Centralised feature management |
| Model Registry | MLflow, Weights & Biases, Neptune.ai | Experiment tracking & model versioning |
| Model Serving | BentoML, Seldon Core, TorchServe | Low-latency production inference |
| Analytics Warehouse | Snowflake, BigQuery, Databricks | Feature engineering & analytics |
| Monitoring | Grafana, Prometheus, Evidently AI | Model performance & drift detection |

Quantum-Enhanced Algorithmic Processing

Quantum computing promises to shatter current computational limits for specific classes of optimisation and simulation problems. Early quantum algorithms — particularly quantum annealing for combinatorial optimisation and quantum machine learning approaches for high-dimensional data — are already being explored in financial portfolio optimisation and drug discovery. While general-purpose quantum advantage for Wurduxalgoilds systems is likely still a decade away for most applications, organisations in compute-intensive fields should be actively monitoring this space.

Edge AI and Distributed Intelligence

The next frontier for real-time algorithmic intelligence is pushing it closer to the data source — from centralised cloud infrastructure to edge devices. Edge AI chips (like NVIDIA Jetson, Google Coral, and Apple’s Neural Engine) now enable sophisticated model inference directly on IoT sensors, autonomous vehicles, and smartphones — eliminating the latency of cloud round-trips entirely. This unlocks applications where even milliseconds of communication delay are unacceptable, including autonomous systems, real-time medical devices, and industrial safety systems.

Multimodal and Foundation Models

The emergence of large foundation models that can process and reason across text, images, audio, video, and structured data simultaneously is creating a new paradigm for Wurduxalgoilds applications. Instead of building separate specialised models for each modality, organisations are increasingly fine-tuning unified foundation models on domain-specific data — dramatically reducing development time and enabling richer, more contextually aware intelligent systems.

Autonomous AI Agents

The most transformative near-term development is the emergence of agentic AI systems — AI that can not only analyse and predict, but plan, act, and adapt across multi-step workflows with minimal human oversight. Combined with real-time data access and tool use, these agents represent a qualitative leap in what Wurduxalgoilds architectures can accomplish — from answering questions to completing complex multi-stage business processes autonomously.


Is Wurduxalgoilds just another name for AI?

How is Wurduxalgoilds different from traditional business intelligence (BI)?

What is the biggest mistake companies make when implementing these systems?

How do you ensure ethical use of Wurduxalgoilds systems?

That is Wurduxalgoilds. And understanding it — truly understanding its architecture, its power, its risks, and its trajectory — is no longer optional for anyone who operates at the intersection of technology and decision-making.